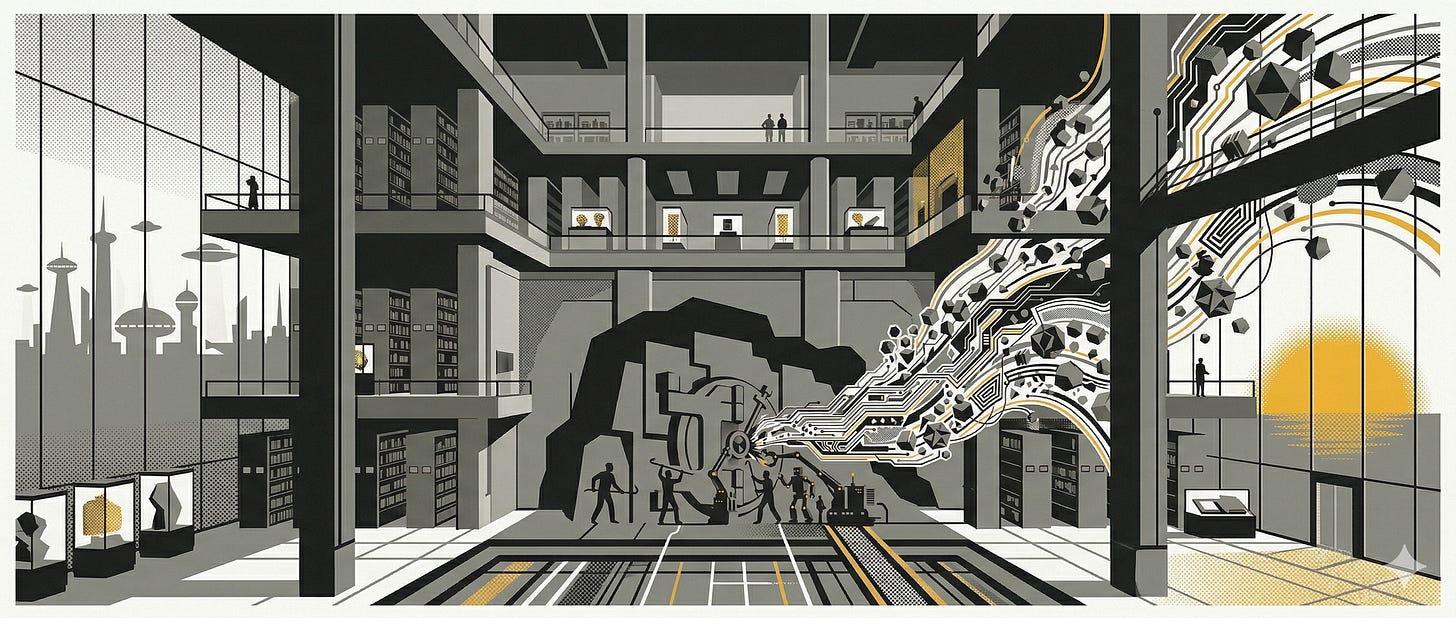

Building the U.S. Data Accelerator

Strengthening the Data Commons to Advance American AI

To learn a new skill or develop expertise in a particular area, one must be exposed to certain types of data. In the context of developing artificial intelligence models, training data is the foundational layer of information on which a model relies. The more high-quality data that is available and used throughout training, the more capable and adaptable the model is across a suite of tasks.

Last week, FAI published The Data Crunch: Accelerating American AI through Government Data Access. The paper explains the importance of strengthening the data commons, meaning freely available and accessible data that can be used to train and improve AI models, specifically by making U.S. government data more accessible.

For the past few years, policymakers in Washington have focused most of their attention on policies that affect access to compute. Examples include the CHIPS and Science Act, the Biden Administration’s diffusion rule (now rescinded), Pax Silica, and the Chip Security Act. The focus on this particular input makes sense, because without cutting-edge hardware, U.S. firms would not be able to build and deploy AI.

But this does not mean that policymakers should neglect other key inputs, particularly data. The moment to enable more access to data is now for two key reasons.

First, unused, high-quality data on the open web is increasingly scarce. After a few years of training runs and model improvements, data that could provide a step-change in performance is unlikely to be surfaced by re-scraping the available web. There is plenty of data “out there”, but there are barriers to accessing it: the data may not be digitized, it may be unstructured, or some other friction may raise the cost of acquisition.

Second, the deluge of copyright and intellectual property litigation by rightsholders against model developers continues to raise the risks of using copyrighted data under the presumption that such use qualifies as fair use. While a few decisions point to less-than-apocalyptic outcomes for model developers, more than seventy cases are still outstanding. Adding to the uncertainty, just last week Disney and OpenAI severed ties, dissolving the largest deal between an incumbent IP holder and a frontier lab over the use of the holder’s products to support image and video generation capabilities. The divide between developers and the content industry seems to be widening by the day.

To address these twin problems, our paper proposes leveraging the significant troves of public data and existing federal authority to create the U.S. Data Accelerator. We identify four types of data that would be ideal starting points for the accelerator. They are:

- Geological & Geospatial Data
- Economic Development and Industrial Capacity Data
- Regulatory Cost Data
- Medical Device and Drug Trial Data

The types of data we identify have two things in common:

1. The data is already being collected by a federal agency and is made available in some form.
2. These types of data complement current capabilities of generative AI models and therefore could enable greater model utility and customization.

The Accelerator is key to unlocking this data because existing platforms for accessing it are outdated. Data.gov currently hosts more than 360,000 datasets, but fragmentation across agencies, a lack of regular updates, and heterogeneity in metadata and structure introduce frictions that limit its usability for model development. Ideally, by establishing a clear, standardized schema and a regular cadence for making such data available, the Accelerator can ensure that this data is used to the fullest extent.

Luckily, there is existing law and policy on the books that legislators and executive branch officials can rely on to advance this idea. The Foundations for Evidence-Based Policymaking Act of 2018 sought to improve federal data access and standardization. Simply put, this law requires agencies to make government data “open by default” and sets specific expectations for government data assets. It provides the statutory foundation for federal data collection and dissemination to the public. Under the authority this law vests in it, the Office of Management and Budget could issue a memorandum giving federal agencies guidance on making publicly available data AI-ready. The Department of Commerce published a report in 2025 on steps taken to ensure publicly available data can support generative AI development and deployment. The insights in that report could serve as a starting point for future guidance.

Even if there are gaps in this approach, there are willing partners within the legislature and the executive branch who stand ready to help.

On the congressional side, Senator Ted Budd (R-NC) and Senator Andy Kim (D-NJ) have introduced legislation to ensure that federal data is accessible and useful for AI model development. Their legislation would direct the National Institute of Standards and Technology to collaborate with other relevant agencies and stakeholders to develop standardized schemas that maximize the accessibility and utility of public data.

Turning to the executive branch, President Trump’s recently released National Policy Framework for Artificial Intelligence recognizes the value of government data for model development. In Section V, the framework directs Congress to “provide resources to make federal datasets accessible to industry and academia in AI-ready formats for use in training AI models and systems.” Such language is unambiguous: federal datasets can and should be made accessible to accelerate American AI development.

The U.S. Data Accelerator is about enabling “American Innovation,” but it is also about “Innovating America.” Making more high-quality data available for model developers can support further scaling of existing model architectures and firms, while also creating opportunities for new firms or developers. Strengthening the data commons will help firms of all sizes, but particularly startups and new entrants. Such a project can fuel America’s entrepreneurial engine. But this project can also demonstrate the government’s ability to be an enabler of innovation by provisioning a public benefit. With the legal frameworks and raw resources clearly identified, the next step is building the capacity and will to execute.